Exploring the Eight Fallacies of Distributed Computing

Building distributed systems by overcoming common fallacies

Introduction

Distributed systems are inherently complex and hard to build. A cab-booking system such as Uber is made up of thousands of services running on thousands of machines all over the globe. When users launch the app, there are millions of API calls performed in the backend. It is fascinating to see how the user is completely abstracted from the internal complexities and delivered the experience within a blink of an eye.

With growing complexity comes a higher chance of failure. Many things are outside engineers’ control: a data centre could lose power, a natural disaster could destroy it, or hard disks could wear out and stop working. Engineers cannot predict every failure, but they can build systems that are resilient to them.

While designing and building a system, we all make certain assumptions. Often our judgement is good and the assumptions hold. In some cases, however, we run into issues that challenge them, and those become important lessons in our careers. Assumptions that turn out to be false are known as fallacies, and there are eight well-known ones in distributed computing. In this article, we will look at each fallacy in turn: what it is, and how to overcome it to build a robust distributed system.

Network is Reliable

Before looking at this fallacy, let’s define “reliable”. Reliability is the ability of a system to continue functioning in spite of failures. The components of a system constantly interact with each other and with external systems, and the network is the backbone that makes this possible.

We have reliable transport protocols such as TCP, which retransmit lost packets and deliver data in order for as long as the connection stays up. TCP cannot help, however, when the connection itself breaks or the machine at the other end crashes, and as systems grow more complex there are many more ways in which they can fail.

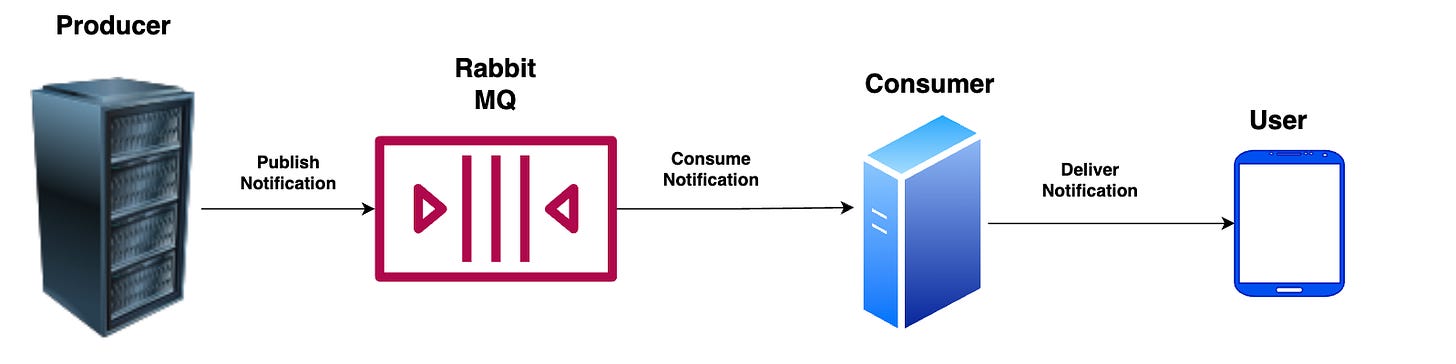

Let’s assume that we want to deliver a notification to the user. Our backend consists of a service that pushes the notification to a queuing service such as RabbitMQ. What can go wrong here?

The RabbitMQ server can crash due to a power failure or an application error.

The RabbitMQ server could process the message and crash just before sending an acknowledgement.

The RabbitMQ server could drop new connections if there is a sudden surge in the number of clients.

These examples are only a small subset of the things that could go wrong. We often assume that the network is smooth and that failures never occur. That assumption leads to outages once the system runs in production and, eventually, to business impact.

Failures of this kind are inevitable in distributed systems, and there are several techniques to deal with them. Although the network isn’t reliable, we can build reliability into our applications.

One commonly used technique is retries. If the server doesn’t accept the connection, retry three to five times, ideally with an increasing delay between attempts, and then fail the request. Many open-source clients have built-in default retry mechanisms, and developers can usually override and customize them as needed.
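A retry loop of this kind might look like the following minimal sketch. The function name `call_with_retries`, its parameters and its defaults are illustrative assumptions, not the API of any particular client library; the exponential backoff with jitter is one common choice among several.

```python
import random
import time


def call_with_retries(operation, max_attempts=4, base_delay=0.1, sleep=time.sleep):
    """Run `operation`, retrying on ConnectionError with exponential backoff.

    All names and defaults here are hypothetical, chosen for illustration.
    """
    for attempt in range(1, max_attempts + 1):
        try:
            return operation()
        except ConnectionError:
            if attempt == max_attempts:
                raise  # out of attempts: surface the failure to the caller
            # Double the wait after each failure and add random jitter so
            # that many clients do not retry in lockstep after an outage.
            delay = base_delay * (2 ** (attempt - 1))
            sleep(delay + random.uniform(0, delay))
```

The `sleep` parameter exists only so that the waiting can be replaced in tests. Retrying is safe only when repeating the operation does no harm, which is why it goes hand in hand with idempotency.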

If the client doesn’t receive an acknowledgement and retries, the server may process the same request twice. This could have serious consequences in systems like WhatsApp or Messenger: users wouldn’t be happy to receive the same message multiple times. In such cases, the server should be idempotent, so that a repeated request has the same effect as processing it once.
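One way to make a handler idempotent is to have the client attach a unique ID to each message and have the server remember which IDs it has already handled. The sketch below assumes exactly that; the class `MessageHandler` and its in-memory dictionary are hypothetical stand-ins for a real service and a durable store.

```python
class MessageHandler:
    """Processes each message at most once, keyed by a client-supplied ID."""

    def __init__(self):
        self._results = {}   # message_id -> result of the first processing
        self.delivered = []  # stands in for the real side effect

    def handle(self, message_id, body):
        if message_id in self._results:
            # A retry of a message we already handled: return the stored
            # result instead of performing the side effect again.
            return self._results[message_id]
        self.delivered.append(body)
        result = f"delivered:{message_id}"
        self._results[message_id] = result
        return result
```

Calling `handle("m-1", "hello")` twice delivers the message once and returns the same result both times. A production version would keep the seen IDs in shared, persistent storage and expire them after some time.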

Latency is zero

Latency is the total time taken for data to travel from one machine to another and back. A large part of it is propagation delay, which grows with the physical distance between the two machines. For example, a round trip between a computer in SF and another in NY typically takes tens of milliseconds, while a round trip from the same computer in SF to one in India often takes well over 100 ms.
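A rough lower bound on latency can be estimated from distance alone. The sketch below assumes that light in optical fibre travels at about two thirds of its speed in a vacuum and that the cable follows the shortest path; the distances used afterwards are approximate great-circle figures, not measurements.

```python
SPEED_OF_LIGHT_KM_PER_S = 299_792  # in a vacuum
FIBRE_FACTOR = 2 / 3               # assumption: light in fibre moves at roughly 2/3 c


def min_round_trip_ms(distance_km):
    """Lower bound on round-trip time over fibre for a one-way distance."""
    one_way_s = distance_km / (SPEED_OF_LIGHT_KM_PER_S * FIBRE_FACTOR)
    return 2 * one_way_s * 1000
```

With roughly 4,100 km between SF and NY, `min_round_trip_ms(4100)` comes to about 41 ms; with roughly 14,000 km between SF and southern India, `min_round_trip_ms(14000)` comes to about 140 ms. Real round trips are slower, because cables do not follow straight lines and every router along the way adds delay.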

Latency can be minimised by placing servers close to each other. Many HFT (High-Frequency Trading) companies place their servers close to the exchange’s server rack, because in HFT every microsecond matters. However, since users are distributed across the globe, it’s impractical to place everything in one location.

Nowadays, many cloud providers build their data centres in different countries. Companies take advantage of this and deploy their workloads in the regions where their users are located. For example, an Indian startup like Swiggy would likely deploy its services in a region in India rather than in the EU or the US.

Physical distance aside, latency is also influenced by how the application is built and how the data is modelled in the database. A few years ago, I wrote code that fetched a few records from PostgreSQL and displayed them after running some business logic. It worked very well in staging and pre-prod, but it brought down the whole site once it was deployed to production.

After analysis, I found that the production table held a million records and had no index on the column used in the query, so each query had to scan the entire table. The staging and pre-prod databases had only a few thousand records. Once the index was added, things started working smoothly.
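The effect of a missing index can be seen by asking the database for its query plan. The sketch below uses SQLite from Python’s standard library purely because it runs without a server; it is a stand-in for the PostgreSQL setup in the story, and the table, column and index names are hypothetical. PostgreSQL offers a comparable `EXPLAIN` command.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE orders (id INTEGER PRIMARY KEY, customer_id INTEGER, total REAL)"
)
conn.executemany(
    "INSERT INTO orders (customer_id, total) VALUES (?, ?)",
    ((i % 1000, i * 0.5) for i in range(10_000)),
)

QUERY = "SELECT * FROM orders WHERE customer_id = ?"


def query_plan(connection):
    """Return SQLite's plan description for QUERY as a single string."""
    rows = connection.execute("EXPLAIN QUERY PLAN " + QUERY, (42,)).fetchall()
    # The last column of each row holds the human-readable plan step.
    return " | ".join(row[-1] for row in rows)


plan_before = query_plan(conn)  # without an index: a scan of the whole table
conn.execute("CREATE INDEX idx_orders_customer ON orders (customer_id)")
plan_after = query_plan(conn)   # with the index: a lookup through the index
```

Printing `plan_before` and `plan_after` shows the plan changing from a table scan to a search that names `idx_orders_customer`. Checking plans against production-sized data before a release is one way to catch this kind of problem early.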

Latency matters in almost every system we build. Users want to get things done fast, and latency is one of the key measures of a system’s performance: the lower the latency, the better the experience.

Bandwidth is infinite

Bandwidth is the amount of data that can be transferred over a network per unit of time. We generally don’t pay attention to how much data gets sent over the wire; a successful response from the remote server is enough to put a smile on our face. Behind the scenes, our data is converted into a stream of 0s and 1s and travels many miles within a few milliseconds.

With growing internet penetration, the number of users has increased, and so has the amount of data. Today, much of that data is unstructured: video, audio, images and so on. In the early 2000s, it was mostly text.

Although internet service providers have increased bandwidth, there is always an upper bound. Insufficient bandwidth leads to network congestion, queuing delays, and packet loss.

Several techniques are used in industry to use bandwidth efficiently. HTTP/2 and HTTP/3 multiplex many requests over a single connection, and WebSockets keep one connection open instead of repeating the request overhead for every message. Compact binary serialization formats such as Protocol Buffers (the default for gRPC) and response compression reduce the number of bytes on the wire compared with plain JSON.
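To get a feel for how much the encoding affects payload size, the sketch below serializes the same records three ways using only Python’s standard library. The sensor readings are made-up data, and the hand-packed `struct` layout is only a stand-in for a real binary format such as Protocol Buffers.

```python
import json
import struct
import zlib

# Hypothetical sensor readings: (sensor_id, timestamp, value)
readings = [(i, 1_700_000_000 + i, 20.0 + i * 0.01) for i in range(1000)]

as_json = json.dumps(
    [{"sensor_id": s, "timestamp": t, "value": v} for s, t, v in readings]
).encode("utf-8")

# The same JSON text, compressed before it goes on the wire.
as_json_compressed = zlib.compress(as_json)

# Fixed-width binary layout: two unsigned 32-bit integers and a 64-bit float,
# 16 bytes per record with no field names repeated.
RECORD = struct.Struct("<IId")
as_binary = b"".join(RECORD.pack(s, t, v) for s, t, v in readings)

sizes = {
    "json": len(as_json),
    "json+zlib": len(as_json_compressed),
    "binary": len(as_binary),
}
```

Inspecting `sizes` shows the plain JSON payload to be several times larger than the 16,000-byte binary one, with compressed JSON well below plain JSON. The trade-off is that binary formats need a shared schema and are harder to read by eye.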

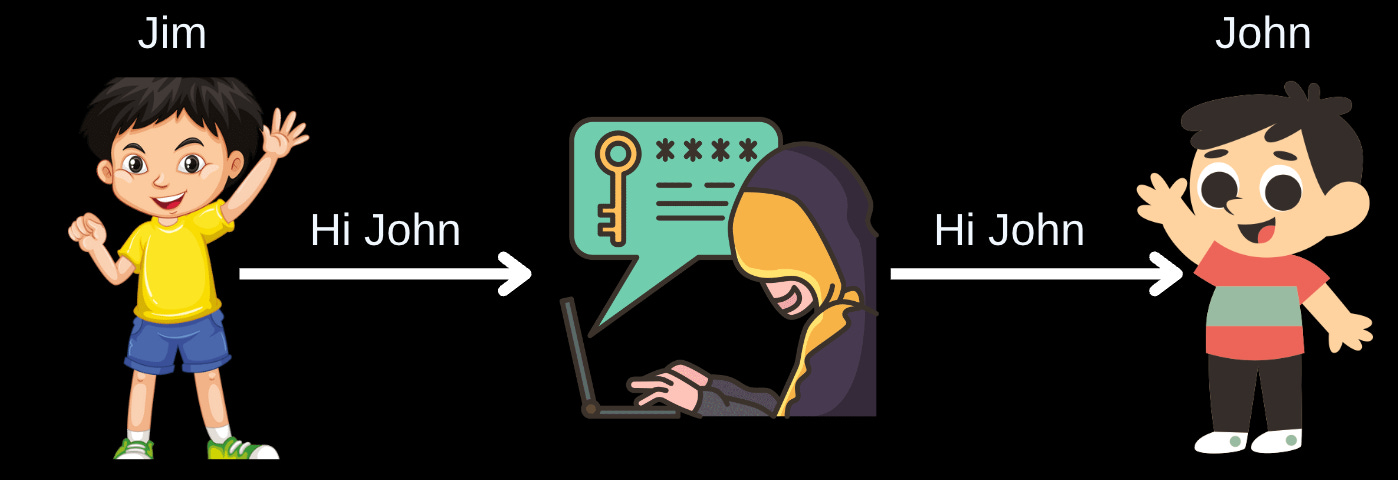

Network is secure

We frequently turn a blind eye to the security of a system. Security is closely tied to customers’ trust, and once you lose that trust, it’s very difficult to earn it back.

In my view, security is one of the most important pillars of a system today. We handle a lot of customer data, and reports of data theft appear almost every day.

Many tools exist to scan a code base for security vulnerabilities. Beyond that, authentication, authorization, and encryption should be built into the system from the start. Companies also run crowdsourced bug bounty programs that help them detect critical issues, loopholes and blind spots in their systems.
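As one small example of building authentication into service-to-service traffic, a sender can sign each payload with a shared secret so that the receiver can reject anything that was forged or altered in transit. The sketch below uses Python’s standard `hmac` module; the key and the function names `sign` and `verify` are hypothetical.

```python
import hashlib
import hmac

# Hypothetical key for illustration; real keys belong in a secret store.
SECRET_KEY = b"example-shared-secret"


def sign(payload: bytes) -> str:
    """Return a hex HMAC-SHA256 signature for the payload."""
    return hmac.new(SECRET_KEY, payload, hashlib.sha256).hexdigest()


def verify(payload: bytes, signature: str) -> bool:
    """Check that the signature matches the payload."""
    # compare_digest takes constant time, so the comparison does not
    # leak how many leading characters of the signature were correct.
    return hmac.compare_digest(sign(payload), signature)
```

A signature like this protects integrity and authenticity only. It does not hide the payload, so it complements transport encryption such as TLS rather than replacing it.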

Topology doesn’t change

In our mental model, we have a picture of a client talking to a load balancer, which forwards the request to a set of servers, which then talk to a database. We assume that this would be the state forever.

The topology, or configuration, of our system is never static; it changes continuously. Servers crash, new machines are introduced, old machines are removed, and new services are launched to handle increasing load. Network administrators deploy new access control rules.

We have to build services taking changes in topology into account. Container orchestration engines such as Kubernetes have simplified many things for developers. Since much of the traditional operations work is now automated, developers rarely need to track the topology themselves. Nevertheless, it’s important to identify single points of failure and bottlenecks in the system.
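In code, coping with a changing topology usually means looking up a service’s address at call time instead of hard-coding it. The sketch below is a toy, in-memory illustration of that idea; the `ServiceRegistry` class and its methods are hypothetical and far simpler than what an orchestrator or a service-discovery system provides.

```python
class ServiceRegistry:
    """Tracks the live instances of each service and hands them out in turn."""

    def __init__(self):
        self._instances = {}  # service name -> list of addresses
        self._counters = {}   # service name -> next round-robin position

    def register(self, service, address):
        instances = self._instances.setdefault(service, [])
        if address not in instances:
            instances.append(address)

    def deregister(self, service, address):
        if address in self._instances.get(service, []):
            self._instances[service].remove(address)

    def resolve(self, service):
        """Return the next live address, or raise if none are registered."""
        instances = self._instances.get(service, [])
        if not instances:
            raise LookupError(f"no live instances of {service}")
        index = self._counters.get(service, 0) % len(instances)
        self._counters[service] = index + 1
        return instances[index]
```

A client that calls `resolve("payments")` before every request keeps working when instances are added or removed, whereas a client holding a fixed address breaks as soon as that machine goes away.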

There is one Administrator

If you are working on a side project, you know the system you are building well: since you built everything from scratch, you know everything about it. In the early days of computing, teams often had one or two people, who were also the administrators and knew the ins and outs of system operations.

This doesn’t hold true anymore. We have teams consisting of more than 20 people. It’s impractical for a single person to know everything about all the systems. Also, every team is autonomous and builds & deploys software independently.

While building services, we need to ensure that they are easy to maintain and manage. They should also be easy to troubleshoot and have the documentation a new engineer needs to get up to speed. One of the key aspects of designing a robust system is operational excellence, so we have to place a lot of emphasis on logging, traceability and monitoring.
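One habit that helps with traceability is attaching a request ID to every log line, so that anyone on any team can follow a single request across services. The sketch below uses Python’s standard `logging` and `json` modules; the service name, field names and helper functions are hypothetical.

```python
import json
import logging
import uuid

logger = logging.getLogger("notification-service")  # hypothetical service name


def format_event(request_id, event, **fields):
    """Render one log record as a JSON line that carries the request ID."""
    return json.dumps(
        {"request_id": request_id, "event": event, **fields}, sort_keys=True
    )


def handle_request(user_id, request_id=None):
    # Reuse the caller's ID when one is passed in; otherwise start a new trace.
    request_id = request_id or str(uuid.uuid4())
    logger.info(format_event(request_id, "request_received", user_id=user_id))
    # ... business logic would run here ...
    logger.info(format_event(request_id, "request_completed", user_id=user_id))
    return request_id
```

Passing the returned ID on to downstream calls lets a log search for that one value reconstruct the full path of a request, even when no single person knows every service involved.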

Transport Cost is Zero

When we transport data from one place to another, there are many hidden costs. The data travels through server racks, switches, routers, fibre optic cables, and many other components, and there is a cost associated with each of them. The larger the network, the higher the cost of managing it.

In addition, we have to serialize the data into a stream of bytes to transport it over the wire, and at the other end deserialize it back into business objects. Serialization and deserialization are often overlooked, but they carry a hidden cost: they are CPU-heavy operations and, depending on the payload, can be time-consuming as well.

When choosing a serialization format, we should prefer lightweight options such as JSON or Protocol Buffers (used by gRPC) over XML-based protocols such as SOAP. Lighter formats produce smaller payloads and are cheaper to parse, which reduces transport costs considerably.

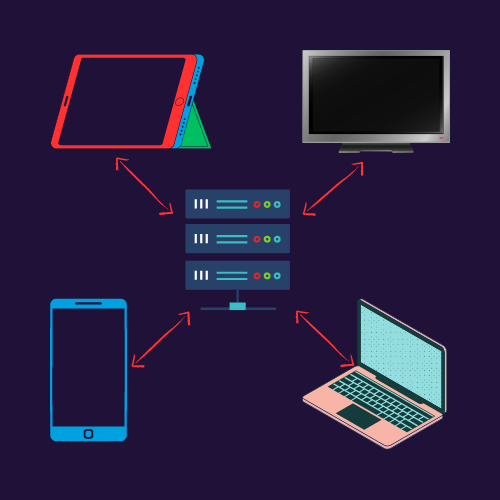

Network is homogeneous

Let’s consider a home network, which may consist of a TV, a mobile phone, a laptop, a tablet, AI assistants such as Alexa, and so on. Each device is unique, has different hardware, and runs software compatible with its hardware. In short, even our home network is not homogeneous.

A friend of mine worked at a company that ran two versions of its server, one for mobile devices and another for browsers, because they relied on an enterprise protocol that didn’t support mobile devices. This created a maintenance overhead, and they eventually had to migrate to an industry-standard protocol.

While building systems, it’s important to think about interoperability. We need to ensure that different devices or clients can communicate with the systems that we build. A rule of thumb is to use industry-standard protocols such as HTTP, WebSockets, etc for communication.

Systems built using industry-standard protocols can be easily integrated by different types of devices having different configurations. This simplifies the interoperability of the software that you build.

Conclusion

Building an ideal system that always works is an unattainable goal. There are factors beyond our control that prevent us from achieving perfection. However, when designing and developing systems, it is crucial to consider the ways in which they can fail. This mindset not only helps us avoid the arduous process of debugging and analysis but also enables us to deliver excellent experiences to our customers.

By understanding and addressing the eight fallacies of distributed computing, we can build more resilient and robust systems. It is important to proactively revisit our design choices and continually reconfirm our beliefs throughout the different stages of development. As computing continues to advance, new fallacies may emerge, and it will be interesting to see how the list evolves.

Ultimately, by embracing the challenges and complexities of distributed systems and staying aware of the fallacies, we can strive for better system design, improved performance, and enhanced customer satisfaction.
